SEQL athlete discovery app — rebuilding a broken recruiter tool from the ground up

College recruiting is a numbers problem. Out of roughly 8 million high school athletes in the US, fewer than 500,000 will compete at the collegiate level. Recruiters have to find the right ones, and the tools available to them were making that harder, not easier.

SEQL had an idea worth building: a recruiter-facing platform with verified athlete data, real search tools, and a direct connection to athlete profiles. The MVP had been built by an external agency and shipped without any user research or usability testing.

When I joined, my brief was to diagnose what was broken and rebuild the experience around what recruiters actually needed.

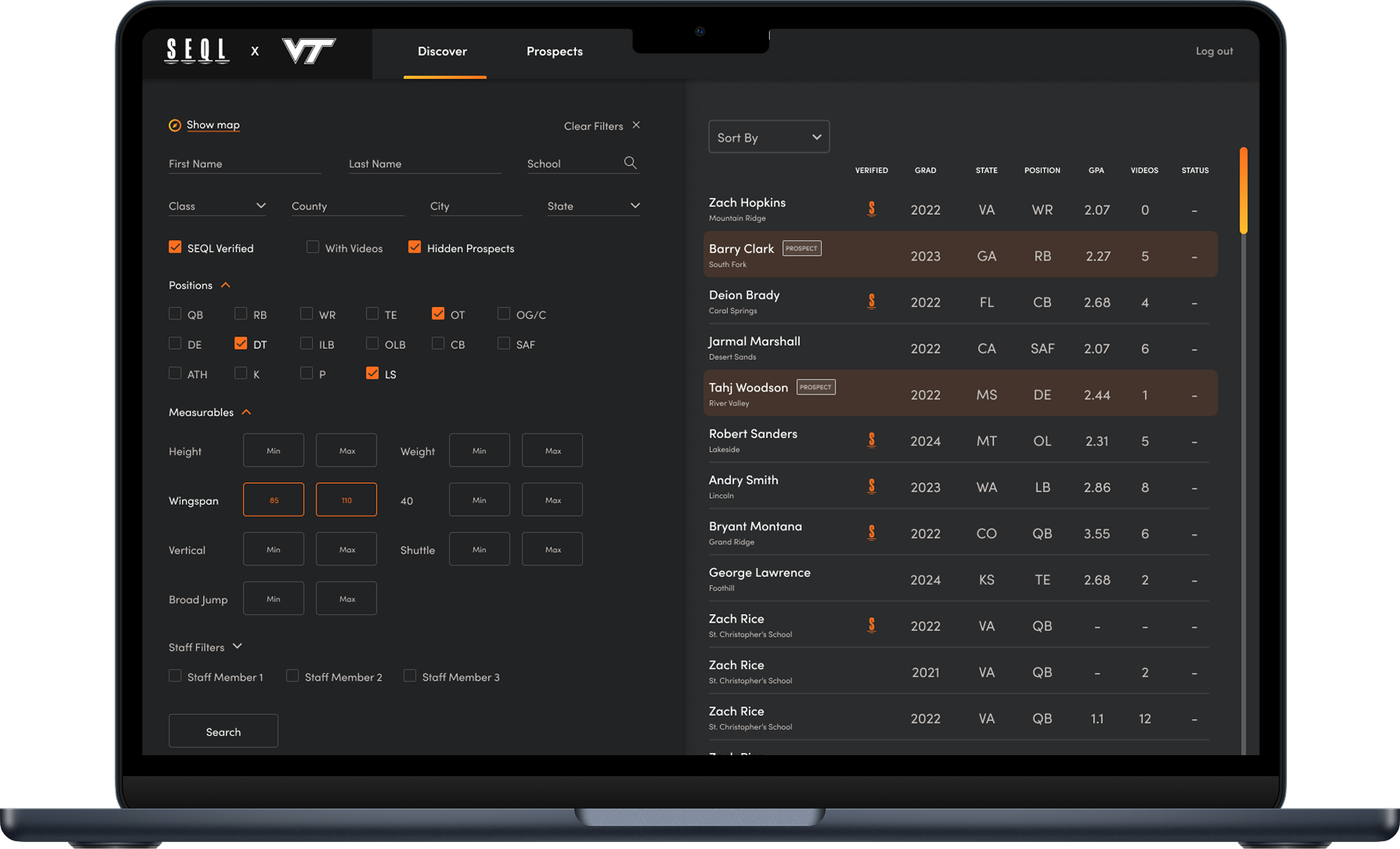

Football, basketball. Larger discretionary budgets. Recruits for one sport only. Often tracking athletes from middle school onward — needs to group and tier prospects by graduation year, position, and degree of interest over a multi-year horizon.

"I'm managing relationships that span years. I need to know exactly where every prospect stands at any point in time."

Football, basketball, baseball. Similar tracking needs to D1 but with less budget. More price-sensitive about platform costs and less tolerant of paying for data they can't verify.

"I can't afford to waste a recruiting visit on someone whose profile turned out to be outdated."

Limited budgets. Often recruits across multiple sports simultaneously. Has unique tracking and filtering needs that existing platforms — built around revenue sport requirements — completely ignore.

"Every tool is built for football. My filters don't even exist on most platforms."

"Athlete-focused programs like BeRecruited prey on families who don't understand the process, and use statistics that aren't verifiable for the recruiter. The emails are typically immediately deleted when received. Kids are spending thousands of dollars a year that, from the college recruiter perspective, are worthless."

— D1 recruiter, user research interview

This quote wasn't an outlier. Across our interviews, the same pattern emerged: recruiters had become deeply skeptical of athlete profile platforms as a category — not just the bad ones, but all of them. That skepticism was the central problem the product had to solve.

On top of this deeply ingrained mistrust of recruiting products, the existing application itself had to be replaced entirely.

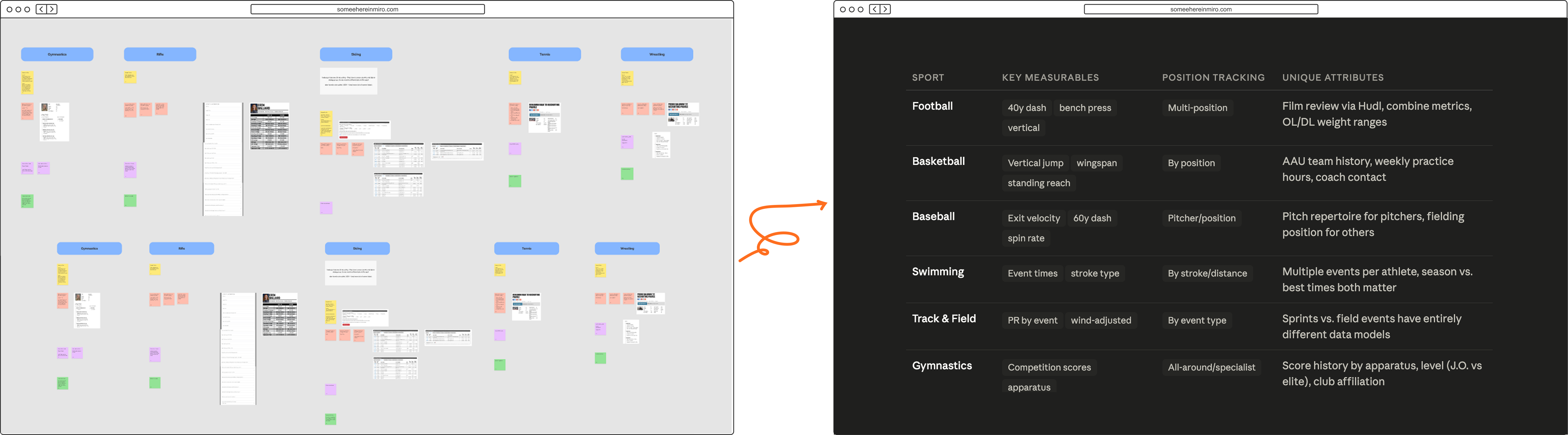

Map-first search and a prominent chat feature both reflect the same fundamental misunderstanding of the recruiting environment. Recruiters search by attributes, not location. And direct recruiter-to-athlete communication outside official channels is regulated by the NCAA, which made chat not just unhelpful but a feature that couldn't ship without putting programs at risk of rules violations.

Two different screens, one root cause: the product was designed without recruiter input.

Recruiters track hundreds of students simultaneously across multi-year pipelines. They search by attributes, not names, and need multi-tiered groupings by graduation year, position, and custom attributes.

The existing board couldn't support any of that at scale.

Profiles were user-sourced, rarely updated, and unverified. Recruiters had learned not to rely on what they saw. There was no annotation, no verification layer, no way to request updates — no mechanism to make the data trustworthy over time.

Replace the map default with sport-specific filtering, driven by stats and measurables, as the primary entry point.

If recruiters could flag, annotate, and request verification of athlete data, profiles would improve over time rather than stagnate.

A built-in prospect board organized by priority, position, and graduating year could make SEQL the single place recruiting decisions lived.

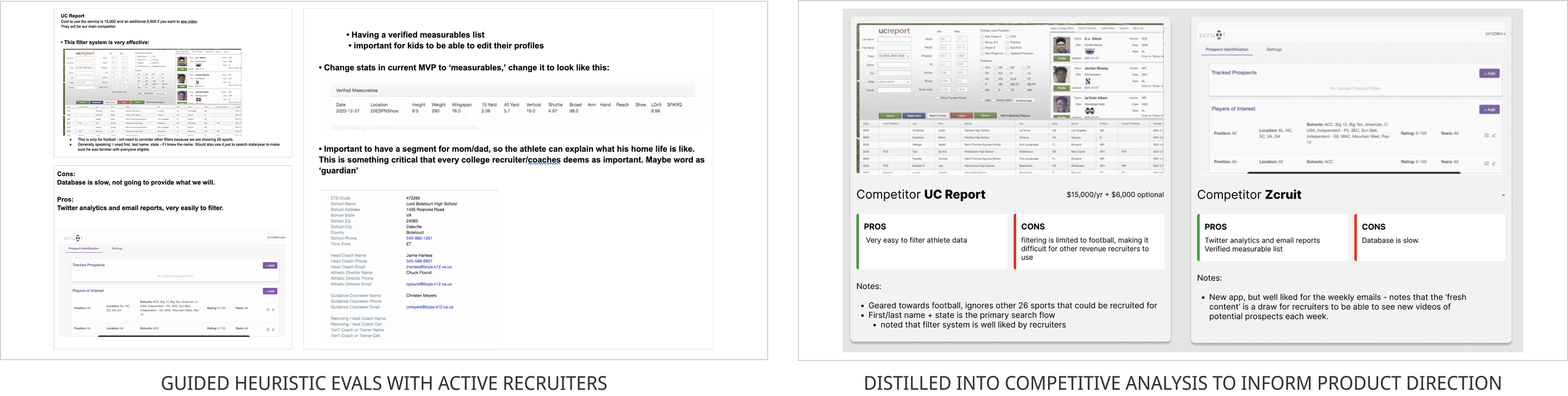

A systematic review of the existing product against usability principles gave the team a shared, documented baseline — rather than competing intuitions about what was broken. It also surfaced the map-first issue explicitly: the default view was oriented around geography when every recruiter we'd spoken to searched by attributes first.

Sessions with active college recruiters focused on their actual workflows — how they currently found and tracked prospects, what they'd tried before, and crucially, what would need to be true for them to trust a platform enough to replace their spreadsheets. The trust findings came directly from these conversations and reshaped the product direction entirely.

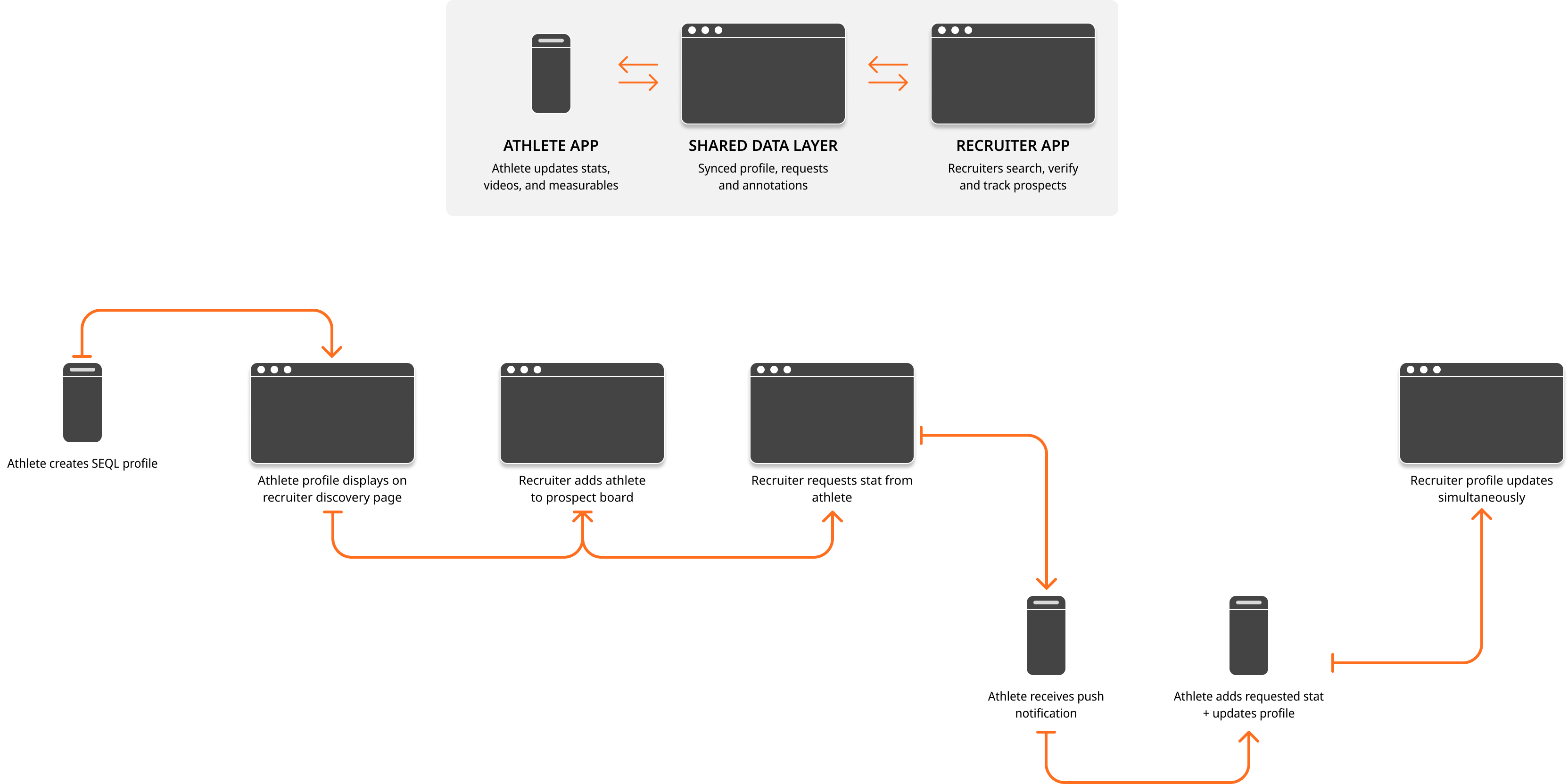

The recruiter platform and the SEQL Athlete App were being designed simultaneously. Decisions I made on the recruiter side (which data fields to surface, how verification requests would work) had direct consequences for what the athlete app had to support, and vice versa. Managing that cross-product dependency shaped how I wrote handoff documentation, so that both sides of the system would work as intended once built.

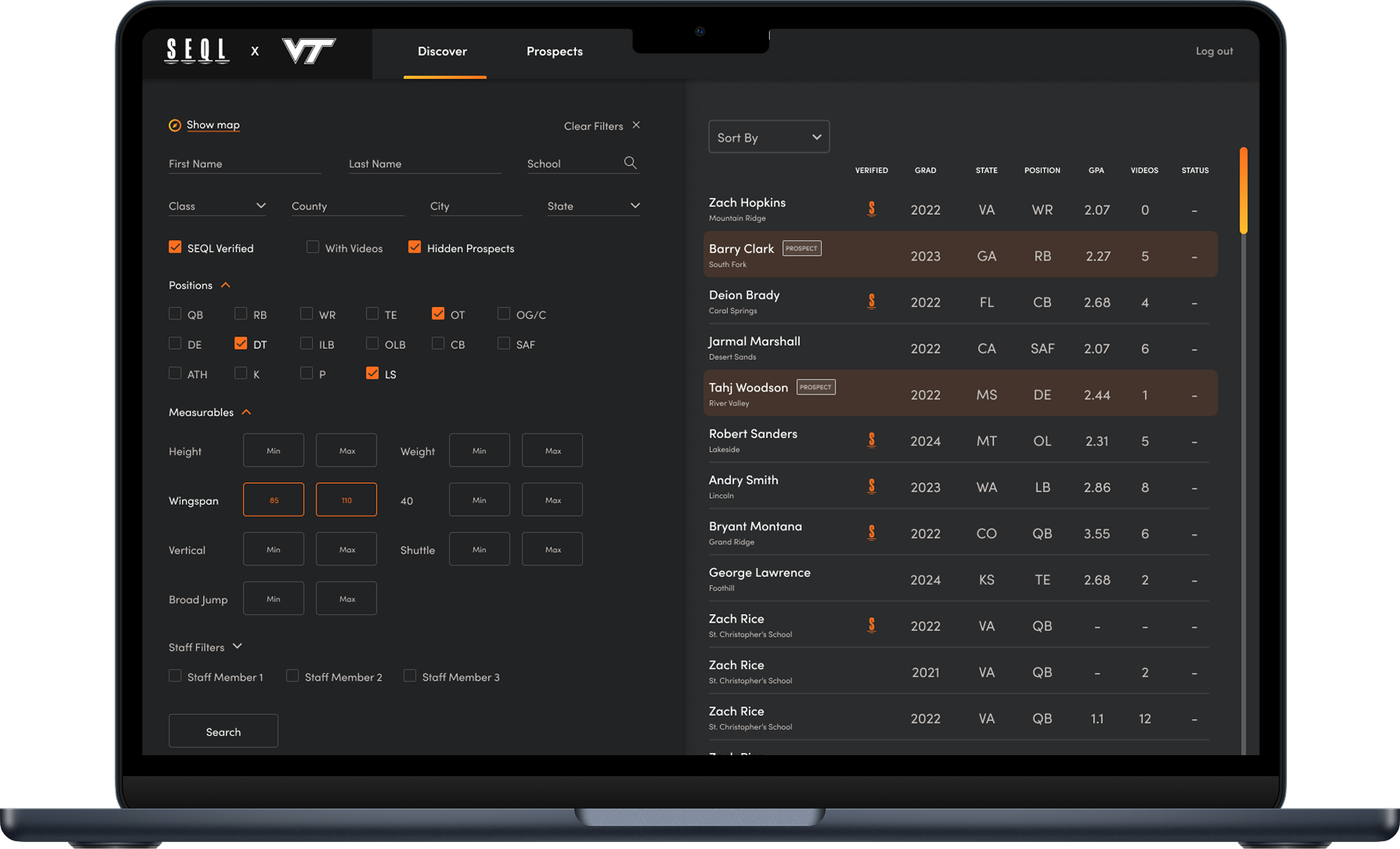

A discovery page with filtering that adapts to the sport — position, height, wingspan, graduating year, and attributes that vary by discipline. A football recruiter's filters look meaningfully different from a diving recruiter's. Generic search was useless for real recruiting decisions; specificity was the whole point.

Rather than treating all athlete data as equivalent, the platform surfaces verified versus unverified information transparently. Recruiters can add notes (particularly useful after scouting events), request verification, and flag discrepancies. Critically, notes and annotations are shared across recruiter profiles within the same program: Recruiter A and Recruiter B always see the most up-to-date information on any athlete, eliminating the siloed knowledge problem that made spreadsheets necessary in the first place.


👉 Turning profiles into collaborative, evolving records

Athlete profiles were often missing the details that mattered most — GPA, transcript records, current measurables like a 40-yard dash time. Rather than leaving recruiters to work around the gaps, the platform lets them request specific missing information directly. A push notification goes to the athlete, nudging them to update their profile. This keeps records accurate and shifts the burden of maintenance off the recruiter without relying on athletes to self-manage proactively.

How it works

👉 This creates a continuous feedback loop instead of static profiles

A prospect board organized by priority level, sport, position, and graduating year, designed to replace the spreadsheet entirely. D1 revenue sport recruiters tracking athletes from middle school onward can now group and filter their full pipeline in one place: all 2024 Top Prospects at Running Back, instantly. No open tabs, no manual spreadsheet updates, no duplicated effort between staff members.

The original MVP launched without usability testing — meaning I inherited problems that could have been caught much earlier. I'd advocate harder for lightweight testing at the MVP stage on future projects; fixing structural issues post-launch is substantially more expensive than catching them in a prototype. I'd also push for athlete-side testing earlier in the process. The recruiter experience was only as good as the data feeding it, and we didn't fully pressure-test that dependency until we were already building.